How we leverage automated quality assurance to build a best-in-class product at speed

Quality Assurance (QA) encompasses processes and tools to ensure that a product meets quality standards. This is a crucial practice for building any sophisticated software at speed and with superior quality.

Leapsome is a performance management and engagement platform that covers areas as complex as goals, performance reviews, engagement surveys, and learning — all in a highly flexible way. To allow our relatively small team to keep building a best-in-class product at speed, we automate our QA processes as much as possible.

Guiding principles

To make certain that we keep building quality software at scale, we focus our QA around the following key concepts:

- Short feedback loop

Tests should run fast enough that we promptly know if cases pass or fail.

- High level of confidence

Tests should give engineers significant confidence that if a case passes, the code won’t unexpectedly break in production.

- Robust

Tests shouldn't break for reasons unrelated to code changes (dates, race conditions, etc.). Engineers shouldn’t spend their time fixing tests.

- Loosely coupled to implementation details

Whenever possible, tests shouldn’t be tightly coupled to implementation details.

Tests should ultimately bring value to the product by securing its quality without sacrificing the team’s speed — perhaps even increasing it. Adding, changing, or refactoring features shouldn’t incur the double cost of writing the code and fixing tests.

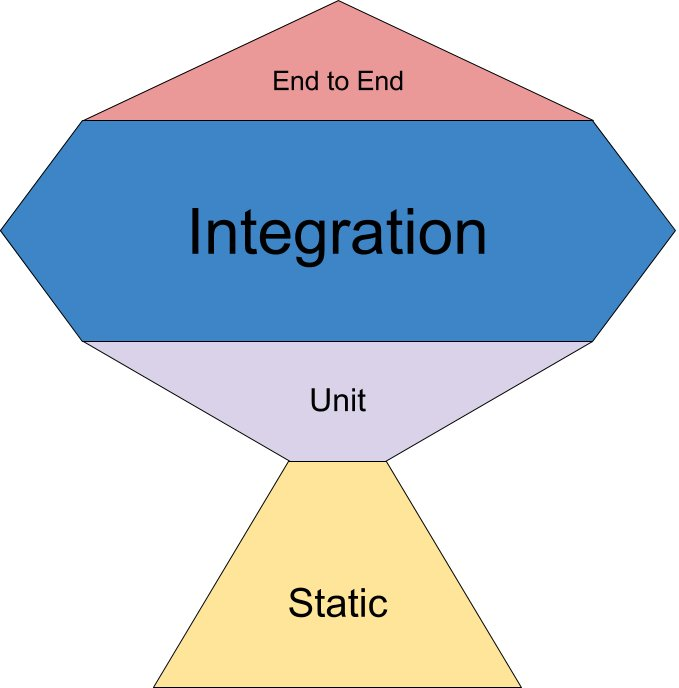

To achieve this goal, and inspired by Kent C. Dodds’ “Testing Trophy” method, we’ve implemented the following steps within our QA pipeline:

Linting

Linting flags suspicious constructs (potential bugs) within the code. This is the very first tool that helps us achieve bug-free code, as it usually identifies basic mistakes and is fast enough to be run as a pre-commit hook.

Linting can also be used to flag stylistic errors, but we prefer to leave those to a code formatting tool (in our case, Prettier). For this reason, our linting focuses only on code constructs.

Linting is first performed as a pre-commit hook, then as a step of our Continuous Integration (CI) pipeline. As our stack is based on JavaScript, we run our linting for both server and client code using ESLint.

Locale tests

As our platform is available in multiple languages (currently English, German, and French), we must always translate new strings into each language.

Locale tests guarantee that we have the necessary translation keys in all our supported languages. A custom script parses translation files and compares them to the original version to ensure that there are no missing or extra keys.

This is performed first as a pre-commit hook, then as part of our CI pipeline.
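A minimal sketch of such a key comparison, assuming translations are stored as nested objects. The function names and sample data are illustrative and not our actual script.

```javascript
// Collect every key of a nested translation object as a dotted path,
// e.g. { goals: { title: "Goals" } } -> ["goals.title"].
function flattenKeys(messages, prefix = "") {
  return Object.entries(messages).flatMap(([key, value]) => {
    const path = prefix ? `${prefix}.${key}` : key;
    return value !== null && typeof value === "object"
      ? flattenKeys(value, path)
      : [path];
  });
}

// Compare one locale against the reference locale.
function compareLocales(reference, candidate) {
  const referenceKeys = new Set(flattenKeys(reference));
  const candidateKeys = new Set(flattenKeys(candidate));
  return {
    missing: [...referenceKeys].filter((key) => !candidateKeys.has(key)),
    extra: [...candidateKeys].filter((key) => !referenceKeys.has(key)),
  };
}

// Hypothetical sample data; a real script would load the translation files.
const en = { goals: { title: "Goals", create: "Create goal" }, save: "Save" };
const de = { goals: { title: "Ziele" }, save: "Speichern", cancel: "Abbrechen" };

const result = compareLocales(en, de);
console.log(result);
// { missing: [ 'goals.create' ], extra: [ 'cancel' ] }
```

A hook built on this would exit with a non-zero status whenever either list is non-empty, which blocks the commit or fails the CI step.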

Unit tests (server code)

Unit tests analyze the accuracy of a given function (“is the output as expected, given a certain input?”). We believe that unit tests only make sense for a system's main building blocks — accordingly, we only unit-test distinct, non-trivial helper functions used in our server code.

Here at Leapsome, we unit-test specific helper functions (such as those in our backend framework) that encapsulate non-trivial logic, so that we can safely build our business logic upon them. We believe that unit tests should represent a tiny fraction of our testing suite; most of the business logic should be covered by integration tests.

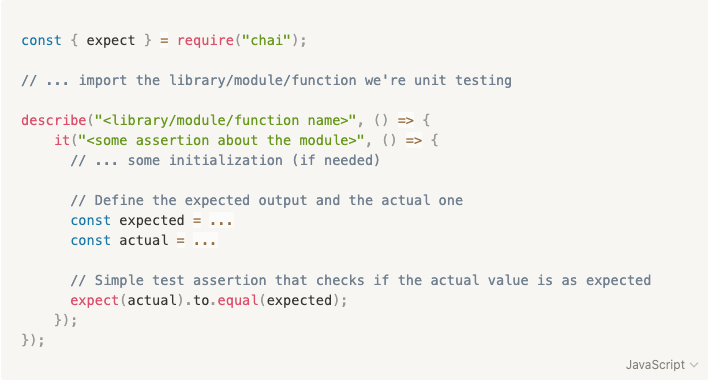

Unit tests are run using mocha, with chai as the assertion library.

When possible, unit tests should only examine one single function and mock any side effects.

A good unit test should answer these questions:

- What is the unit under test (module, function, class, whatever)?

- What should it do?

- What was the actual output?

- What was the expected output?

- How do you reproduce the failure?

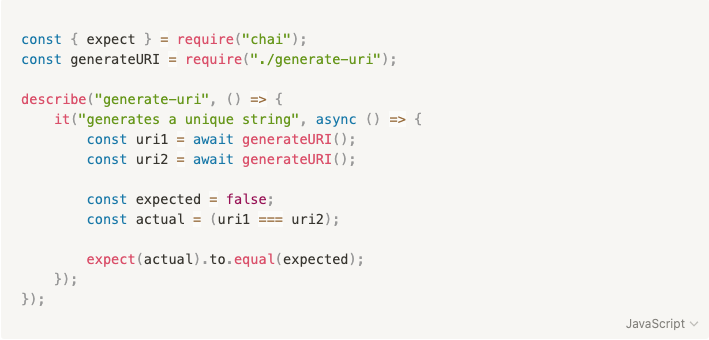

As an example, we have a function in our code that generates unique, hard-to-guess identifiers for some of our resources, which makes it a natural candidate for unit tests.

Unit tests (client code)

The prior definition of unit tests for the server code holds true for the client code.

In this case, we unit-test routes, stores, helper functions (utils), and components that form the foundation of our user interface (UI). Unit tests should firmly assure that our UI’s building blocks are solid.

More often than not, we try to test functions in isolation by mocking external dependencies (such as the router or the store). In rare cases, we also do snapshot testing for presentational components.

We try to minimize the use of snapshot tests — as they are, by definition, tightly coupled with implementation details.

Only simple presentational components should be snapshot-tested, on top of more traditional test assertions.

Unit tests are run using Jest and vue-test-utils.

On the client side, unit tests can take various forms:

- Helper functions are tested similarly to server-side unit tests;

- Routes are schema-validated (making sure they exist within the expected parameters);

- Stores are tested by mocking API calls where needed;

- Components are shallow-mounted or snapshot-tested (we might also mock routes, props, and store if necessary).

When asserting DOM elements in components, we reference them via data-test-* attributes so as not to couple the test with the actual DOM element or other attributes that might change (such as class or id).

Most of our client code unit tests follow the Vue Testing Handbook’s guidelines.

Integration tests

Unit tests are great for testing functions in isolation. Yet, as software becomes more complex, mocking side effects lowers the confidence that every piece will work as expected in production.

A better approach to holistically test a complex software application involves writing integration tests. Instead of looking only at a single function's output, we also (and mostly) test the side effects of intricate pieces of code.

When we talk about integration tests, we look exclusively at anything that happens on the server side, from the API endpoints onward (handler, business logic, database).

We’re neither testing the API layer, nor the integration with the client (covered by end-to-end tests).

Integration tests allow for a high degree of confidence with a low level of code coupling. This makes it easier to refactor and further develop the code while ensuring it still performs as expected. It also guarantees that the business logic in the backend is correct while providing enough flexibility for growth and refactoring.

Testing side effects, in this case, means that our business logic generates and manipulates the right data, as we mostly look into the database as a side effect of handler functions.

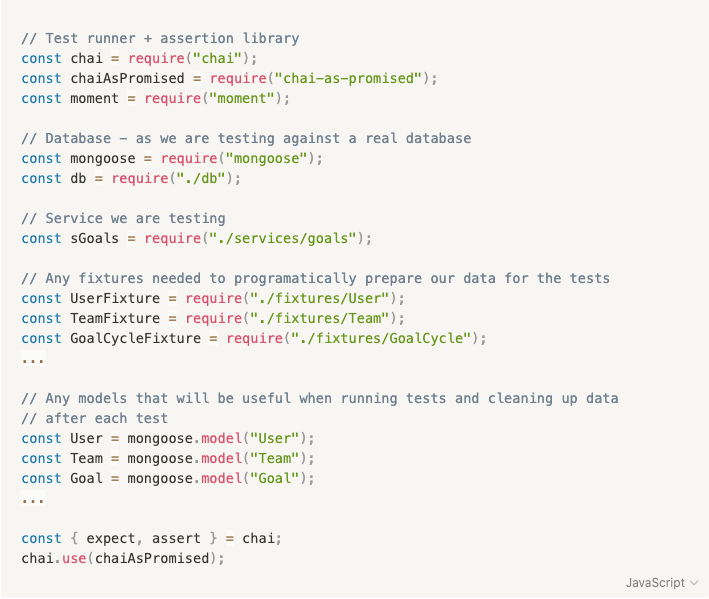

Like unit tests, integration tests are run using mocha (plus chai as the assertion library).

To secure a clean test environment, we run integration tests on Docker against a clean MongoDB instance. To facilitate the programmatic creation of data, we provide fixtures in the /fixtures folder that can feed on templates (located in the /template folder).
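To give an idea of how a fixture can feed on a template, here is a minimal sketch. The `userTemplate` object and the `buildUser` function are hypothetical names, and the sketch only builds the object in memory; a real fixture would also insert the document into the test database.

```javascript
// Hypothetical template: sensible defaults for a user document.
const userTemplate = {
  name: "Test User",
  email: "test.user@example.com",
  role: "employee",
  active: true,
};

let sequence = 0;

// Hypothetical fixture: starts from the template, keeps emails unique
// across calls, and lets each test override only the fields it cares about.
function buildUser(overrides = {}) {
  sequence += 1;
  return {
    ...userTemplate,
    email: `test.user+${sequence}@example.com`,
    ...overrides,
  };
}

const admin = buildUser({ role: "admin" });
const employee = buildUser();
console.log(admin.role, employee.role); // admin employee
```

Keeping the defaults in the template means a test only spells out the data that matters for the case at hand.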

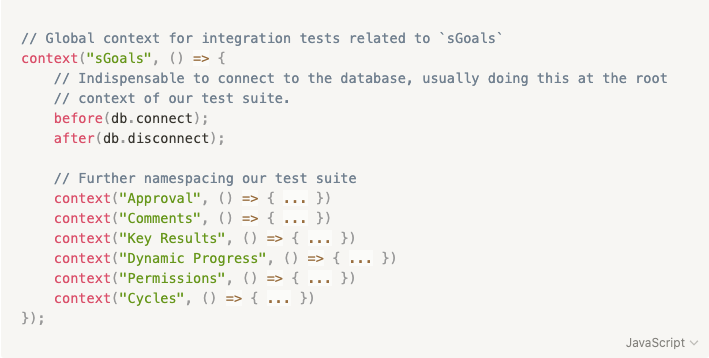

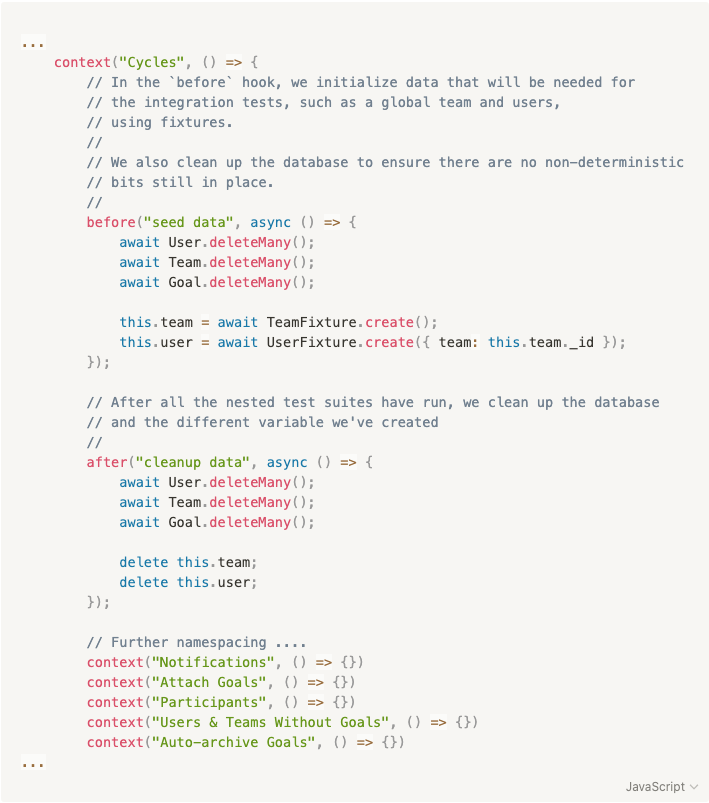

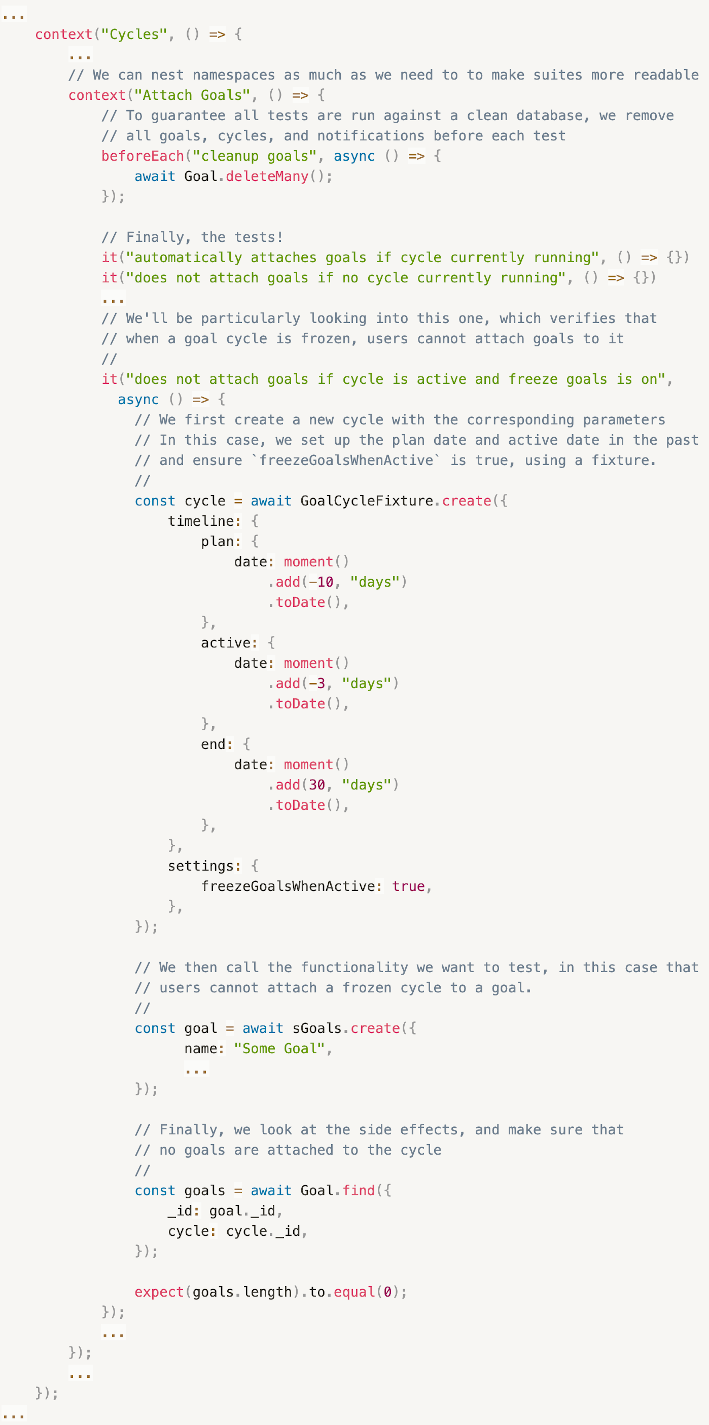

As an example, the integration tests for our Goal Cycle functionality are organized into the following parts:

- Imports

- Global setup

- Initialization for Goal Cycles

- Test cases

Integration tests really shine when testing use cases. In this instance, we'll verify that when a goal cycle is frozen, users cannot attach it to newly created goals.

End-to-end tests

While integration tests look at everything that happens beyond the API endpoint (handler, database), end-to-end tests take an even more holistic approach and examine the product from a user’s perspective.

In this case, end-to-end tests don't focus on testing the UI. They are functional tests that make sure the product behaves as expected for the user.

Unit tests ensure that a function returns the expected output; integration tests check if handlers produce the expected side effects; end-to-end tests verify that the product holistically behaves as expected from a user’s standpoint.

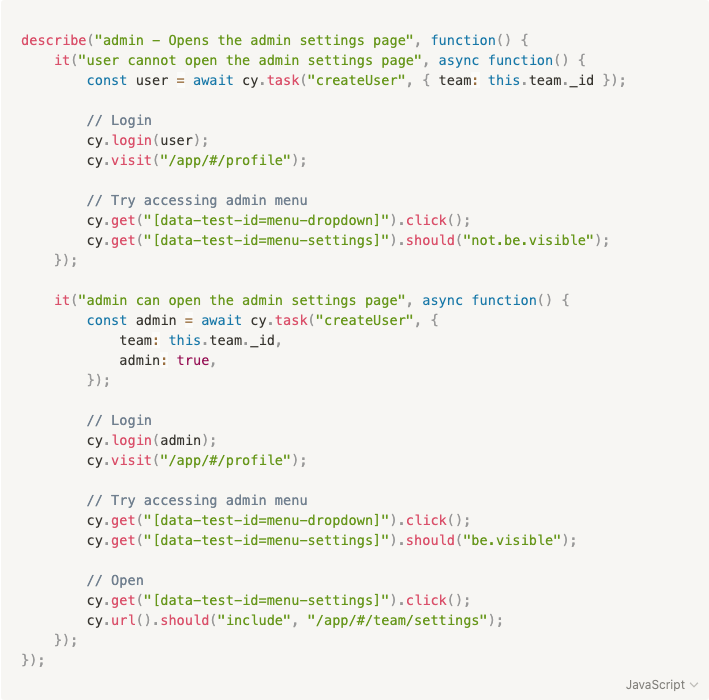

We run end-to-end tests on Cypress.

For a clean test environment, each suite works against a newly minted account.

To do so, we run a dedicated command in global beforeEach and afterEach hooks, registered in support/index.js.

We use tasks to help us write more idiomatic tests. As an example, to create users in a test suite, we employ a createUser task.
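A minimal sketch of how such a task could be registered. The `registerTasks` function, the user fields, and the in-memory `users` array are assumptions for illustration; a real task would create the user through the backend or directly in the database.

```javascript
// Hypothetical in-memory stand-in for the backend that stores test users.
const users = [];

// Registers custom tasks. `on` is the function Cypress passes to the
// plugins file (or to setupNodeEvents in newer Cypress versions).
function registerTasks(on) {
  on("task", {
    // Tasks run in Node, so they can create data without driving the UI.
    createUser({ name, email, role = "employee" }) {
      const user = { id: users.length + 1, name, email, role };
      users.push(user);
      // A task must return a value (or null), never undefined.
      return user;
    },
  });
}

module.exports = { registerTasks, users };

// In a spec file, the task would be invoked like this:
//
//   cy.task("createUser", { name: "Ada", email: "ada@example.com" })
//     .then((user) => { /* log in as the user, visit a page, ... */ });
```

Moving data creation into a task keeps the spec focused on the behavior under test instead of on clicking through setup screens.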

To guarantee that our end-to-end tests are not tightly coupled to the UI, we exclusively use data-test-id attributes to select DOM elements.
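One way to keep these selectors consistent is a small helper that builds the attribute selector. The `byTestId` name and the ids in the usage comments are hypothetical.

```javascript
// Builds a CSS attribute selector for an element tagged with data-test-id.
function byTestId(id) {
  // JSON.stringify adds the surrounding quotes and escapes quotes in the id.
  return `[data-test-id=${JSON.stringify(id)}]`;
}

console.log(byTestId("create-goal-button"));
// [data-test-id="create-goal-button"]

// In a Cypress spec:
//
//   cy.get(byTestId("create-goal-button")).click();
//   cy.get(byTestId("goal-title-input")).type("Grow revenue");
```

Since the selector depends only on the data-test-id attribute, changes to classes, ids, or the element type don't break the test.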

Manual tests

Even though we aim for as much automation as possible, nothing beats booting up the product locally and manually testing the new functionalities we’ve built! :)

As you can see, we take QA seriously at Leapsome!

Every technical endeavor we undertake is geared toward achieving our purpose of making work more fulfilling for everyone, and being able to build high-quality features rapidly aligns with that purpose.

By the way, we’re hiring!
